Hi friends, in my previous Functional Coverage post I shared a high-level overview of Coverage and its two types, Code Coverage and Functional Coverage, along with an example of a coverage data model, i.e. cover groups.

To understand Coverage as a whole, there are several areas to know about, listed below:

Coverage Metrics

Functional Coverage Metrics

Code Coverage Metrics

Coverage Planning – Specification to Test plan

Coverage Modeling – Test plan to Functional Coverage Model

Functional Coverage Model Coding Standards – Coding for effective analysis & easy debugging

Coverage Reports Generation & Report Analysis

Since covering everything in a single post would not be a good idea, this post focuses only on Coverage Metrics (particularly Functional Coverage Metrics). The other sections will be covered in upcoming Functional Coverage posts, so please stay tuned for those as well.

OK, first I would like to share a saying commonly attributed to the legendary Peter Drucker, who is known for developing metric-driven processes.

What gets measured, gets done,

What gets measured, gets improved,

What gets measured, gets managed.

– Peter Drucker

Let's start discussing the Coverage Metrics listed above.

Coverage Metrics:

Whatever our simulation methodology is, directed testing or constrained random verification, the following questions always pop up when we try to understand our verification progress:

Have all the design features and requirements identified in the test plan been verified?

Are there lines of code or structures in the design model (e.g. RTL code) that were never exercised?

“Coverage” is the Metric we use during simulation to answer these questions.

To understand Coverage properly, we need to understand the concepts of Controllability and Observability. Controllability refers to the ability to influence or activate an embedded finite state machine, structure, specific line of code, or behavior within the design by stimulating its input ports. Observability, on the other hand, refers to the ability to observe, on the external ports, the effects of a specific internal finite state machine, structure, or stimulated line of code. A testbench has low Observability if it observes only the external ports of the design model, because the internal signals and structures are hidden from the outside.

To identify a design error using a simulation testbench approach, the following conditions must hold:

The Testbench must generate proper input stimulus to activate a design error.

The Testbench must generate proper input stimulus to propagate all effects resulting from the design error to an output port.

The Testbench must contain a monitor that can detect the design error that was first activated then propagated to a point for detection.

There might be scenarios where a design error is activated but, in the absence of other supporting stimulus, cannot propagate to the point of observation. Such design errors or bugs stay hidden inside the design.

Let's understand this using the simple design structure shown in Figure 1 below:

Figure 1: Controllability & Observability

In the figure above, the input stimulus activates a design error (bug) on the path shown in red, but the SEL line must select this path before its effect becomes visible on the external ports. In the structure shown, SEL is currently HIGH and therefore selects the green channel. Until SEL goes LOW, the design error stays hidden inside the design and will not be caught.
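To make the idea concrete, here is a minimal Python sketch of the situation. Figure 1 only tells us that a buggy (red) path and a healthy (green) path feed a SEL-controlled selector, so the logic inside each path, the function names, and the AND/OR bug are all my assumptions for illustration.

```python
def design_output(a: int, b: int, sel: int) -> int:
    """Hypothetical design: two internal paths behind a 2-to-1 selector.

    sel == 1 selects the healthy (green) path, sel == 0 the buggy (red) one.
    """
    green_path = a & b  # intended behaviour: AND
    red_path = a | b    # assumed bug: this path ORs instead of ANDing
    return green_path if sel == 1 else red_path


def reference_output(a: int, b: int, sel: int) -> int:
    """Golden model: both paths are supposed to compute a AND b."""
    return a & b


def mismatches(sel_values):
    """Return the (a, b, sel) stimulus for which design and reference differ."""
    found = []
    for sel in sel_values:
        for a in (0, 1):
            for b in (0, 1):
                if design_output(a, b, sel) != reference_output(a, b, sel):
                    found.append((a, b, sel))
    return found


# SEL held HIGH: the bug exists internally but never reaches the output.
print(mismatches(sel_values=[1]))     # []
# SEL allowed to go LOW: the erroneous value propagates and is detected.
print(mismatches(sel_values=[0, 1]))  # [(0, 1, 0), (1, 0, 0)]
```

With SEL stuck HIGH, every input combination passes even though the red path is wrong, which is exactly the hidden-bug case described above.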

From the concepts of Controllability and Observability it is clear that every line of code and every internal structure of the design model must be exercised or activated by the input stimulus. Code Coverage metrics look like a possible solution here, but no single metric is sufficient to ensure complete coverage in the verification process. For example, 100% code coverage in a simulation regression does not ensure that 100% of the functionality is verified, because code coverage measures neither the internal concurrent behavior of the design nor the temporal relationships between events within a design block or across multiple design blocks. Similarly, even when we achieve 100% Functional Coverage, Code Coverage might still be only 95%. Either the Functional Coverage model is incomplete and a few items were missed, or some features from the initial design functional specification were coded in the design model (RTL code) but left out of the coverage model.
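A toy Python sketch can show how code coverage reaches 100% while a functional scenario is still missing. Everything here is hypothetical: the `dut` function, its two mode flags, and the hand-rolled branch tracking stand in for a real design and a real coverage tool.

```python
executed_branches = set()


def dut(mode_a: bool, mode_b: bool) -> int:
    """Hypothetical design with two independent features."""
    result = 0
    if mode_a:
        executed_branches.add("A")  # stand-in for tool-collected code coverage
        result += 1
    if mode_b:
        executed_branches.add("B")
        result += 2
    return result


# Two directed tests: each feature is exercised, but never both together.
tests = [(True, False), (False, True)]
seen_combinations = set()
for mode_a, mode_b in tests:
    dut(mode_a, mode_b)
    seen_combinations.add((mode_a, mode_b))

code_coverage = 100.0 * len(executed_branches) / 2
all_combinations = {(a, b) for a in (False, True) for b in (False, True)}
functional_coverage = 100.0 * len(seen_combinations) / len(all_combinations)

print(code_coverage)        # 100.0
print(functional_coverage)  # 50.0
```

Both branches were executed, so the code coverage figure is perfect, yet the case where both features are active at the same time was never simulated. Only an explicit model of the combinations reveals that gap.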

Types of Coverage Metrics:

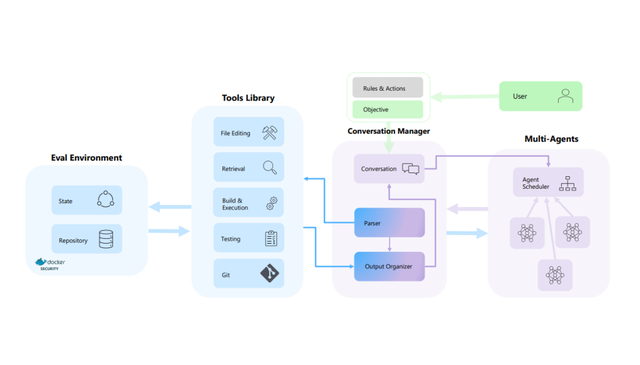

There are two popular ways to classify Coverage Metrics: by “Method of Creation” and by “Source of Origin”. Let's look at Diagram 1 and then I'll describe them in more detail:

Diagram 1: Different Types of Coverage Metrics

Let's start with “Method of Creation”, which means how the coverage metrics are implemented. There are two ways: Implicit and Explicit. Implicit coverage is implemented automatically by the EDA tool's algorithms. Explicit coverage, on the other hand, is defined and implemented by users from their own understanding and knowledge of the design.

The second classification is “Source of Origin”, which also has two variants: Implementation based and Specification based. With an Implementation source, the RTL code (design model) acts as the source of information. With a Specification source, the design functional specification is the source: the user manually extracts the features and properties of the design to be verified and captures them in some form of model (one approach is to develop a functional coverage model). Combining the two classifications as a matrix gives Diagram 1. The four combinations are:

Implicit + Implementation => Generated automatically by the EDA tool from the design implementation (enabled using command-line switches); this produces Code Coverage

Explicit + Specification => User extracted features from the Design Functional Specification and implemented in form of Functional Coverage Model

Explicit + Implementation => Instrumentation created by the user that is based on the behavior encapsulated by the design implementation (User hand coded Assertions)

Implicit + Specification => Automatically extracted by a tool, and are derived from the design specification (An area of academic research)

There are two primary forms of coverage metrics in production use in industry today and these are:

Code Coverage Metrics (Implicit coverage)

Functional Coverage/Assertion Coverage Metrics (Explicit coverage)

I won't cover Code Coverage Metrics in this post; maybe sometime in the future. I believe many of you are already familiar with Code Coverage, and if not, there are plenty of resources available to refer to.

Let's discuss Functional Coverage Metrics and Assertion Coverage Metrics, both of which are types of Explicit Coverage.

Functional Coverage Metrics:

The objective of Functional Coverage Metrics is to measure verification progress with respect to the functional requirements of the design. In other words, Functional Coverage helps us answer:

Have all the identified and specified functional requirements been implemented?

Have all the implemented features been tested?

Functional Coverage has been instrumental in constrained random verification (CRV) methodology. A constrained random approach exercises a huge stimulus space, and identifying what has and has not been covered is tedious if done manually by debugging waveforms or text reports. Functional Coverage provides a framework for assessing the results within defined boundaries of expectation.

Additionally, Functional Coverage is very helpful for tracking verification progress against the design functional specification, i.e. how much of the spec is covered at a particular time. It works like this: with the help of an EDA tool, every section of the functional specification is mapped to a functional coverage group or point in the verification plan. After simulation, the functional coverage results are automatically back-annotated into the verification plan to indicate how much of the specification is covered by the simulation regression.
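As a rough illustration of the back-annotation idea, the Python sketch below rolls per-point results up into per-section percentages. The section names, coverage point names, bin counts, and the simple "bins hit over total bins" scoring are all assumptions made for this example. Real EDA tools use their own plan formats and weighting rules.

```python
# Hypothetical verification plan: spec section -> coverage points mapped to it.
plan = {
    "3.1 Reset behaviour": ["cg_reset.cp_state"],
    "3.2 Burst transfers": ["cg_burst.cp_len", "cg_burst.cp_type"],
}

# Hypothetical regression results per coverage point: (bins hit, total bins).
results = {
    "cg_reset.cp_state": (4, 4),
    "cg_burst.cp_len": (3, 8),
    "cg_burst.cp_type": (2, 2),
}


def annotate(plan, results):
    """Return the percentage of bins hit for each plan section."""
    annotated = {}
    for section, points in plan.items():
        hit = sum(results[point][0] for point in points)
        total = sum(results[point][1] for point in points)
        annotated[section] = round(100.0 * hit / total, 1)
    return annotated


for section, percent in annotate(plan, results).items():
    print(f"{section}: {percent}%")
# 3.1 Reset behaviour: 100.0%
# 3.2 Burst transfers: 50.0%
```

A report like this makes it obvious which part of the specification still needs stimulus, without opening a single waveform.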

Since functional coverage is not an implicit coverage metric, it cannot be extracted automatically, so the user has to create the functional coverage model manually. At a high level, the following steps are required to build a functional coverage model:

Identify the functionality, design intent, or features that we want to measure.

Implement the machinery to measure the functionality or design intent identified in the first step.

Note: Since the functional coverage must be manually created, there is always a risk that some functionality that was specified is missing in the coverage model.

Functional Coverage Metrics Types:

The functional behavior of any design, at least as observed from any interface within the verification environment, consists of both data components and temporal components (the behavior of events over time). Hence, at a high level, there are two main types of functional coverage measurement we need to consider: Cover Groups and Cover Properties.

Let's look at these two functional coverage measurements:

Functional Data Coverage:

With respect to functional coverage, the sampling of state values within a design model or on an interface is probably the easiest form to understand. We refer to this form of functional coverage as cover group modeling. It covers state values observed on buses, groupings of interface control signals, and registers. The point to note is that the values being measured are sampled at a particular time, either explicitly or implicitly. The SystemVerilog “covergroup” construct is part of the machinery we use to build the functional data coverage model.
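To show the mechanics without tying it to any tool, here is a small Python sketch of what a cover point does conceptually: it holds named bins and counts the sampled values that fall into each. This is not SystemVerilog and not any library's API. The `CoverPoint` class, the `burst_len` signal, and the bin ranges are hypothetical names chosen for illustration.

```python
class CoverPoint:
    """Counts how often sampled values fall into named bins."""

    def __init__(self, name, bins):
        self.name = name
        self.bins = bins  # bin name -> range of values belonging to that bin
        self.hits = {bin_name: 0 for bin_name in bins}

    def sample(self, value):
        """Record one sampled value, as a sampling event would."""
        for bin_name, values in self.bins.items():
            if value in values:
                self.hits[bin_name] += 1

    def coverage(self):
        """Percentage of bins hit at least once."""
        covered = sum(1 for count in self.hits.values() if count > 0)
        return 100.0 * covered / len(self.bins)


burst_len = CoverPoint(
    "burst_len",
    {"single": range(1, 2), "short": range(2, 5), "long": range(5, 17)},
)

# Values observed on the (hypothetical) bus at each sampling event.
for observed in [1, 3, 4, 2]:
    burst_len.sample(observed)

print(burst_len.hits)                  # {'single': 1, 'short': 3, 'long': 0}
print(round(burst_len.coverage(), 1))  # 66.7
```

The empty `long` bin is the useful part of the result: it tells us the stimulus never produced a long burst, so a constraint or a test needs to change.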

Functional Temporal Coverage:

With respect to functional coverage, temporal relationships between sequences of events are probably the hardest to reason about. However, ensuring that these sequences of events are properly tested is important. We use cover property modeling to measure temporal relationships between sequences of events. Probably the most popular example of cover properties involves the handshaking sequence between control signals on a bus protocol. Other examples include power-state transition coverage associated with verifying a low-power design. The SVA “cover property” construct is part of the machinery we use to build the functional temporal coverage model. Cover properties are usually written alongside assertions, using the same SVA property syntax.
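The handshake example can be sketched in Python as a check over a recorded trace. The function below counts rising edges of a request that are followed by an acknowledge within a bounded number of cycles, which is roughly what an SVA sequence such as `$rose(req) ##[1:3] ack` describes. The signal names, the trace, and the latency bound are assumptions, and real cover property match counting in a simulator is more subtle than this.

```python
def count_handshakes(trace, max_latency):
    """Count req rising edges followed by ack within max_latency cycles.

    trace is a list of (req, ack) samples, one per clock cycle.
    """
    covered = 0
    prev_req = 0
    for cycle, (req, _ack) in enumerate(trace):
        if req == 1 and prev_req == 0:  # rising edge of req
            window = trace[cycle + 1 : cycle + 1 + max_latency]
            if any(ack == 1 for _req, ack in window):
                covered += 1
        prev_req = req
    return covered


# Hypothetical trace: the first request is acknowledged two cycles later,
# the second request is dropped without ever seeing ack.
trace = [
    (0, 0), (1, 0), (1, 0), (1, 1), (0, 0),
    (1, 0), (1, 0), (1, 0), (1, 0), (0, 0),
]

print(count_handshakes(trace, max_latency=3))  # 1
```

A count of zero after a full regression would mean the handshake sequence was never exercised, no matter how good the data coverage looks.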

Conclusion:

Friends, with that I would like to conclude this post. As a recap, we covered the different areas of Coverage, Coverage Metrics, Controllability and Observability, the types of Coverage Metrics, Functional Coverage Metrics and their types, and finally a brief look at cover groups and cover properties.

Since Functional Coverage is a vast topic, I would like to cover the remaining topics, such as Functional Coverage model implementation, coding for better analysis and easy debug, and the steps for Functional Coverage report generation and analysis, separately in upcoming posts.
